Grants-in-Aid for Scientific Research (Kakenhi)

Venturing into a wide range of basic to applied research

Kakenhi are funds that provide broad support for scientific research based on the free ideas of the researchers themselves, and cover a wide range of academic studies spanning from basic to applied research. Both faculty members and researchers actively apply for Kakenhi grants, and many applications are approved. Grants obtained from Kakenhi are also distributed to researchers at other institutions (co-investigators) for collaborative research work.

Similarly, many NII faculty members also participate as co-investigators in the Kakenhi-funded projects of researchers at other institutions.

Applications Accepted (FY2023)

| Role | No. of applications accepted | Amount (in thousands of yen) |
|---|---|---|
| Project Leader (Principal Investigator) | 64 | 393,048 |
| Co-investigator (Other institutions → NII) | 52 | 56,302 |

[Model Cases of Research Funded by Kakenhi]

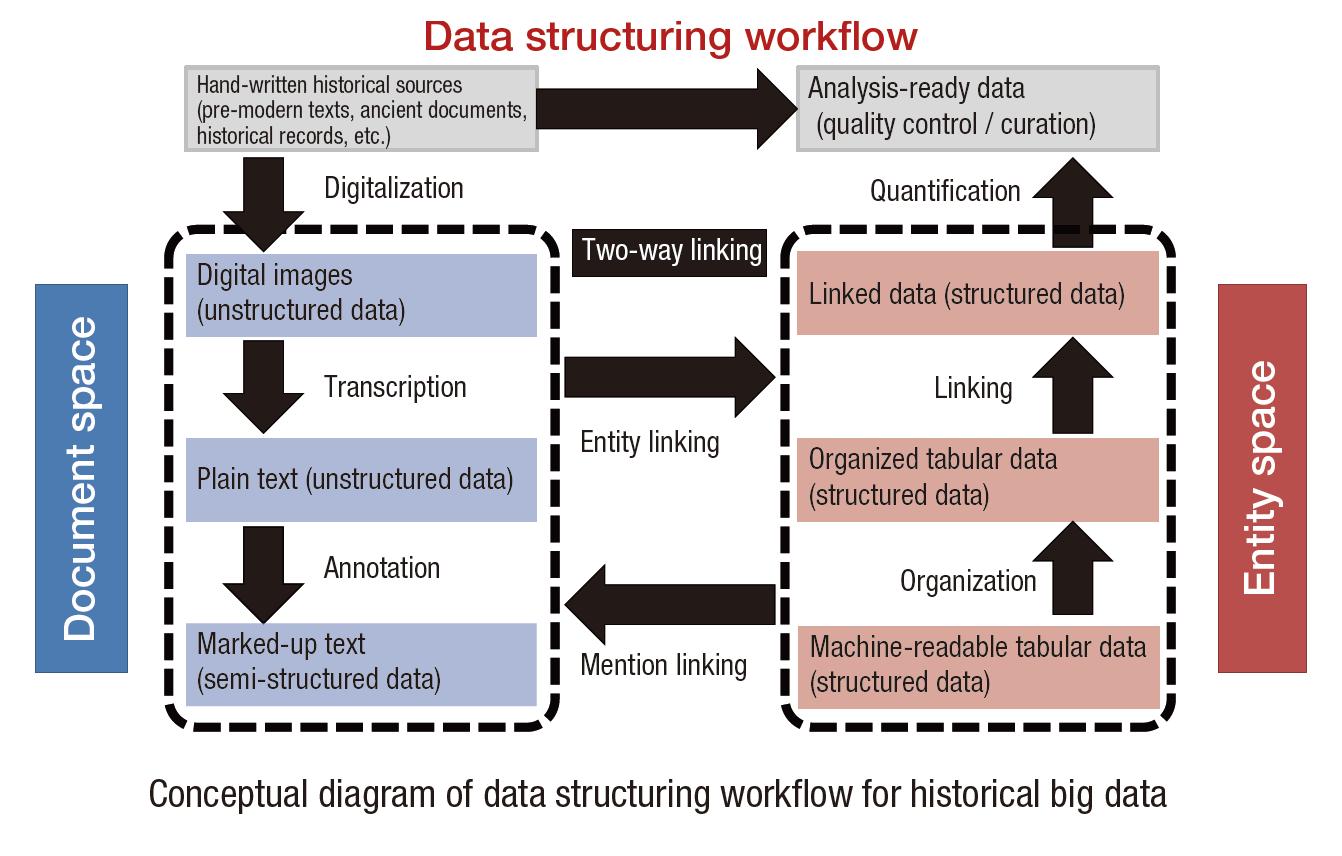

Historical Big Data: A Multidisciplinary Research Platform for Connecting Historical Sources and Data-Driven Models

Grant-in-Aid for Scientific Research (A)

Principal investigator: KITAMOTO Asanobu

Professor, Digital Content and Media Sciences Research Division

Modern big data research stems from a data-driven approach, whereby the world is reconstructed and analyzed based on the large-scale collection and integration of data. The goal of historical big data research is to extend this approach to the past to reconstruct and analyze the world of the past in cyberspace. This research project focuses on a data structuring workflow for end-to-end integration of historical documents and applications that explore the past using data-driven models. We aim to improve the efficiency and quality of the data structuring workflow by applying cutting-edge techniques. We plan to build a historical big data research infrastructure to collect large amounts of historical records for various applications, such as climatological or seismological records, and analyze them with data-driven models. The role of computer scientists is to build an open multidisciplinary research platform to bring together humanities scholars interpreting historical sources and domain experts specializing in data-driven models. This research paves the way for modern social issues to be addressed based on knowledge of the past.

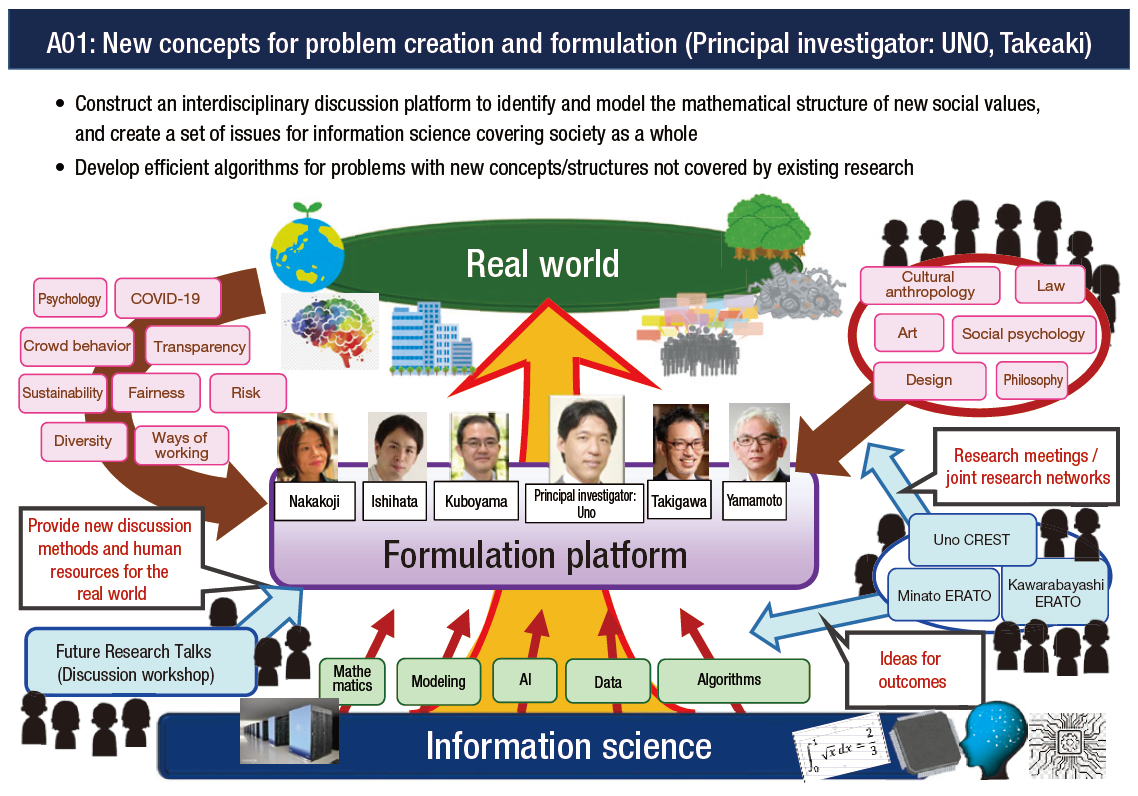

New Problem Formulation on Next Generation Informatics and Researches on their Algorithms

Grant-in-Aid for Transformative Research Areas (A)

Principal investigator: UNO, Takeaki

Professor, Principles of Informatics Research Division

Many of the fundamental problems currently considered in computer science were conceived forty or fifty years ago, and their overall structures have not changed. The world, however, has changed significantly since then, and we have new ways of understanding the structure of society and the human mind, such as social psychology.

Additionally, many cases have emerged that these fundamental problems cannot handle. One example is the panic buying of toilet paper during the COVID-19 pandemic, which began when it was widely reported that a social media post warning of a paper shortage was in fact false. This shows that such social issues cannot be solved efficiently even if the computer science problems of detecting fake news and disseminating information rapidly were solved, although these are regarded as urgent problems for addressing recent social issues. In social psychology, this is referred to as a panic state, in which people think, "I am calm, but I don't know what the person next to me will do."

This suggests that hoax prevention should be researched from a completely different perspective. In this research project, researchers in informatics and other fields including humanities, natural sciences and mathematics will engage in deep discussions by exchanging concepts, ways of thinking, and values. We will come up with a catalogue of issues that informatics should tackle and ways to address them. In order for difficult interdisciplinary discussions to be held in an effective way, we will also develop a methodology for such discussions between researchers from different fields that includes ways of thinking, communicating, listening, managing discussions, and building spaces.

Graph Algorithms and Optimization: Theory and Scalable Algorithms

Grant-in-Aid for Scientific Research (S)

Principal investigator: KAWARABAYASHI, Ken-ichi

Professor, Principles of Informatics Research Division

In recent years, as what is called the "fourth paradigm" of (data-intensive) scientific discovery has emerged, information processing technology has become indispensable to almost every field of science. Given that algorithms are the driving force of high-performance processing, their study is more vital than ever. For example, current algorithmic innovations in information retrieval, privacy protection, and other areas are fueling the creation of national-scale businesses. This study uses mathematical tools to enrich and strengthen the theoretical foundations of algorithms (especially graph algorithms), with the aim of increasing their speed and scalability.

There are three main research topics:

1. Structure analysis in discrete mathematics and graph algorithms

2. Online algorithm development and its application to machine learning

3. Application of algorithmic techniques to machine learning

Improving Security and Performance of Network-On-Chip Using Approximate Computing

Grant-in-Aid for Scientific Research (B)

Principal investigator: KOIBUCHI Michihiro

Professor, Information Systems Architecture Science Research Division

One of the goals of Society 5.0 is to achieve "a sustainable and resilient society that ensures the safety and security of citizens." To make this a reality, we need semiconductor chips with zero trust security. This research explores network-on-chip technology to prevent information leakage and computation interference. Specifically, to prevent information leakage by hardware Trojans, identity theft, DoS (Denial of Service) attacks, and side channel attacks, we will investigate (1) information hiding using approximate computing techniques of voltage overscaling and lossy compression and (2) application-level anti-tamper technology using altered data for calculation.

The eventual goal is to achieve new technology combining stronger security with higher performance.

Study on Decentralized Management of Large-scale Sensor Networks Utilizing Biological Mechanisms

Grant-in-Aid for Scientific Research (B)

Principal investigator: SATOH Ichiro

Professor, Information and Society Research Division

In the natural world, there are phenomena where the oscillations of multiple entities are autonomously synchronized, such as the expansion and contraction of cardiac muscles and emission of light by fireflies. In this study, we consider regular measurements from multiple sensor nodes making up a sensor network as oscillations at each node. The measurement cycles of the sensor nodes will be aligned through decentralized control, utilizing the mechanism of biological oscillation synchronization. We propose a method to improve the measurement quality of sensor networks, by either aligning the measurement timing (equivalent to phase) of multiple nodes within the synchronized cycle (simultaneous multiple measurement), or by shifting the measurement timing evenly (improving time granularity). We will perform simulations and implement this method using an actual sensor network to clarify its effectiveness and efficacy.
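The idea of aligning measurement timing through oscillator synchronization can be illustrated with a small simulation. The sketch below is not the project's method: it assumes the standard Kuramoto model of coupled phase oscillators, all-to-all coupling between nodes, identical natural frequencies, and illustrative parameter values. The names `simulate` and `order_parameter` are hypothetical helpers introduced only for this example.

```python
import math
import random


def order_parameter(phases):
    """Kuramoto order parameter r in [0, 1]; r close to 1 means phases are aligned."""
    n = len(phases)
    re = sum(math.cos(p) for p in phases) / n
    im = sum(math.sin(p) for p in phases) / n
    return math.hypot(re, im)


def simulate(n_nodes=20, coupling=1.0, omega=2 * math.pi,
             dt=0.01, steps=3000, seed=0):
    """Euler integration of d(theta_i)/dt = omega + K * mean_j sin(theta_j - theta_i).

    Each phase stands for where a sensor node is within its measurement cycle.
    All-to-all coupling is an assumption made for brevity; a real network
    would typically couple only neighboring nodes.
    """
    rng = random.Random(seed)
    phases = [rng.uniform(0.0, 2 * math.pi) for _ in range(n_nodes)]
    for _ in range(steps):
        updated = []
        for p_i in phases:
            # Each node adjusts its own phase using only the phases it observes.
            pull = sum(math.sin(p_j - p_i) for p_j in phases) / n_nodes
            updated.append((p_i + dt * (omega + coupling * pull)) % (2 * math.pi))
        phases = updated
    return phases


if __name__ == "__main__":
    final = simulate()
    print(f"order parameter after simulation: {order_parameter(final):.4f}")
```

With positive coupling, the nodes drift toward a common phase, which loosely corresponds to simultaneous multiple measurement. A negative `coupling` value pushes phases apart instead, which is related to spreading the measurement timing, although this simple model does not by itself guarantee even spacing.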

Current Landscape of Overlay Services and their Impact on the Use of Preprints

Grant-in-Aid for Scientific Research (C)

Principal investigator: NISHIOKA, Chifumi

Assistant Professor, Digital Content and Media Sciences Research Division

With the spread of Open Science, there is a growing movement towards making preprints (versions of papers to be published in academic journals before they have been peer reviewed) available on preprint servers. Overlay services, which provide academic authentication such as peer review for preprints, have started to become available. This study will systematically organize these overlay services based on the form of academic authentication and how open they are. We will look at the current state of overlay services and their acceptance in Japan and other countries. By observing changes over time in the number of citations of preprints authenticated by overlay services, we aim to quantitatively identify the impact of overlay services on the use of preprints.

Shape Estimation in Underwater Environments Using Optical Information From a Wide Wavelength Range

Grant-in-Aid for Early-Career Scientists

Principal investigator: ASANO, Yuta

Assistant Professor, Digital Content and Media Sciences Research Division

A non-destructive, non-invasive, and non-contact method of obtaining 3D data on ocean floor shape, depth, and marine life is of the utmost importance for investigating underwater and marine resources. To acquire high-precision, high-resolution 3D data, methods have previously been developed that measure depth using spatial features in images and the travel time and phase of light. However, because these methods generally assume that images are acquired in air, they cannot be applied directly to underwater environments, where light is absorbed, scattered, and refracted, which imposes various constraints. The aim of this research is to develop a non-destructive, non-invasive, non-contact, wide-area, high-resolution, and high-accuracy technique for shape estimation in underwater environments by comprehensively analyzing information from a wide wavelength range and by exploiting these physical effects on light, which were previously considered obstacles to analysis.

Understanding Finger-Braille Interaction

Grant-in-Aid for Scientific Research (B)

Principal investigator: BONO, Mayumi

Associate Professor, Information and Society Research Division

The purpose of this study is to shed light on the transmission and comprehension mechanisms of finger-Braille communication.

The project aims at creating a research environment that enables linkage analysis and analysis of speech content, by developing a technique for transcribing and building a database of finger-Braille interactions.

People with deafblindness are affected by visual and auditory impairments.

Finger-Braille is a means of communication used principally by persons with "blind-first deafblindness," who first lose their sight and later their hearing.

In the finger-Braille method, six fingers of the person with deafblindness (index to ring fingers of each hand), which are likened to the six keys on a Braille typewriter, are tapped directly ("Tokyo Deafblind Association" website).

In this study, conversational and interactional analyses are performed on finger-Braille dialogue data that have already been recorded.

To enable these analyses, it was essential to develop a method of transcribing the interaction by matching the positions of the finger-Braille strokes to the speech occurring simultaneously.

The analysis results obtained with this method will be shared with the deafblind community.

The possibility of extending this line of research will be examined using the method of party research.
